Apple Vision Pro

I wasn’t expecting this to be my first blog post, but given the timing of the Apple Vision Pro launch, I couldn’t resist the desire to share some of my many thoughts. I don’t want to repeat what many smart people before me have already stated, so I’ll start by calling out a few perspectives that I’ve borrowed from others and support:

AR is better. Augmented reality, with the ability to transition to full immersion, was absolutely the right way to design this type of product. The fact that you’re still present in your environment, along with the ability to interact with just your eyes and subtle hand gestures, will lead to an absolutely magical experience.

Borrowed from people who have tried it - Ben Thompson, John Gruber, Marques Brownlee (video link)

Trust that it’ll get better. We should have faith that the hardware will get slimmer and cheaper, the apps will come, and it will be an overall success. It’s not smart to bet against a $3 trillion company throwing its full weight behind a technology like this, especially with Apple’s track record.

Borrowed from Haje Jan Kamps - TechCrunch (link to article)

Replacing the Mac. Success for this product will mean moving AR/VR headsets from novelty devices to the go-to device for content consumption and productivity. This means that if all goes well it will eventually replace the Mac for Apple.

Borrowed from Ben Thompson - Stratechery (link to article)

The camera is creepy AF. Everyone saw the demo of the man taking a video of what are suggested to be his kids, and it looked, well, quite dystopian. While I do imagine that the Photos experience would be quite enjoyable, no one should be taking pictures of other people while wearing this thing, especially during a moment like this. We already learned this lesson with Google Glass a decade ago, so I was surprised to see Apple make this blunder.

Source: Every meme on Twitter.

What was underemphasized or missed

Apple's app & developer advantage. Apple has a massive advantage in its existing developer base and apps, which will work from day 1 with minimal changes.

A new interaction paradigm has been invented. An entirely new input mechanism, eye tracking & gestures, opens up a huge range of new opportunities. More on this later.

This was a developer launch. Keep in mind, this was only the developer launch. We have yet to see what the world's best product marketing team has done to make nerd helmets cool. In addition, developers still have a year to build apps for it.

Creating a new product category is one of the hardest (and most exciting) things you can do as a product designer, and you have to actively and continuously battle against the business pressures that fuel feature creep so that you can nail the foundations first and set yourself up for sustainable improvements.

This initial version was obviously going to be missing many key features. Keep in mind that when the iPhone was first released, it was missing really basic stuff like copy-and-paste, 3G connectivity, and multitasking. With this launch, Apple needed to solve the most difficult and crucially important problem first — nailing the fundamental interactions that define and constrain this platform. From all that I've seen so far from the people who've tried it, it seems Apple succeeded here once again, and I'm pumped to go build their killer app.

Where I see the money being made

Marketplaces for 3D content: Everyone in the gaming world sees where this is going. Micro-transactions for adding skins to your character, vehicles and weapons have taken over the gaming world. There will be people who want to virtually stylize their room, add art & decor, and even customize their own arms and hands, and people are used to paying for this type of stuff now. Not only that, app developers will also need a library of objects, materials, shaders and other 3D resources to jumpstart their projects. Whoever successfully facilitates the digital economy of 3D creative assets will do very well. While the big game engines like Unity and Unreal Engine have a notable head start, I expect marketplace wars are inevitable. Grab your popcorn 🍿.

AR Development Tools: Getting computers to understand the physical environment around us is still a difficult problem that is not fully solved. Luckily, Apple has made a ton of advancements to ARKit and RealityKit over the years and made some new APIs available to developers. However, Apple will never be in a position to detect and classify every type of object developers may be interested in. I predict we will see multiple companies building tools, pre-trained ML models & SDKs to make this process even easier for developers. If I had to guess, it will be a few people from the self-driving car industry who are fed up with the pace of progress and want to jump onto the new shiny thing.

One challenge, however, is that the models will need to run locally to achieve low enough latency and respond quickly to the continuously changing real-world environment. Speaking from firsthand experience, there is still a notable amount of work needed to bring algorithms you develop in Python to iOS or macOS (which will be the same for visionOS) and optimize them for Metal and Apple’s Neural Engine. This even applies to the common, out-of-the-box algorithms from libraries like PyTorch or TensorFlow, but I expect the open-source community (or even Apple themselves) will do this work for us.
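To put rough numbers on the latency point, here is a back-of-the-envelope sketch in Python. Every figure in it is an assumption I picked for illustration (a 90 Hz refresh rate, a 40 ms network round trip, an 8 ms on-device inference time), not a measurement of any real device or model, and the function names are my own.

```python
def frame_budget_ms(refresh_hz: float) -> float:
    """Time available per displayed frame, in milliseconds."""
    return 1000.0 / refresh_hz


def frames_late(latency_ms: float, refresh_hz: float) -> int:
    """Number of whole frames that pass before a result with this latency arrives."""
    return int(latency_ms // frame_budget_ms(refresh_hz))


# Assumed numbers, for illustration only.
REFRESH_HZ = 90.0            # assumed display refresh rate
CLOUD_ROUND_TRIP_MS = 40.0   # assumed network round trip, before any inference
ON_DEVICE_MS = 8.0           # assumed on-device inference time

print(f"budget per frame: {frame_budget_ms(REFRESH_HZ):.1f} ms")
print(f"cloud result arrives {frames_late(CLOUD_ROUND_TRIP_MS, REFRESH_HZ)} frame(s) late")
print(f"on-device result arrives {frames_late(ON_DEVICE_MS, REFRESH_HZ)} frame(s) late")
```

Under these assumed numbers, a model that answers within the frame budget keeps up with the scene, while anything that has to cross the network is already several frames behind before inference even starts.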

3D Content Capture & Editing Tools: In addition to physical world understanding, we’ll obviously see an explosion in 3D content. This can either be done entirely using 3D design tools or by capturing an existing object or scene in the real world. I for one am most curious about the combination of the two, especially given what we’re seeing in 2D design tools like Generative Fill in Photoshop.

As far as capture goes, this used to be done using $35,000 AI-driven 3D scanners, but very soon we’ll be able to do this using the cameras & LiDAR on our iPhones, iPads and Vision Pro devices. In fact, just this week at WWDC we saw Apple release Object Capture APIs for iOS (API docs here). I’ll also be very curious to see if GoPro or any of the many drone companies will make a big move into this area.

On the software side, I expect we’ll see development and design tools that span both capture and editing in one seamless workflow. While that could be done by Apple through its new Reality Composer Pro tool, I believe we’ll see heavy competition in this space regardless.

What I’d like to see from developers

I already have a list of over 30 ideas for new apps that continues to grow by the day, but I’ll name a few that I expect, or at least hope, to see released.

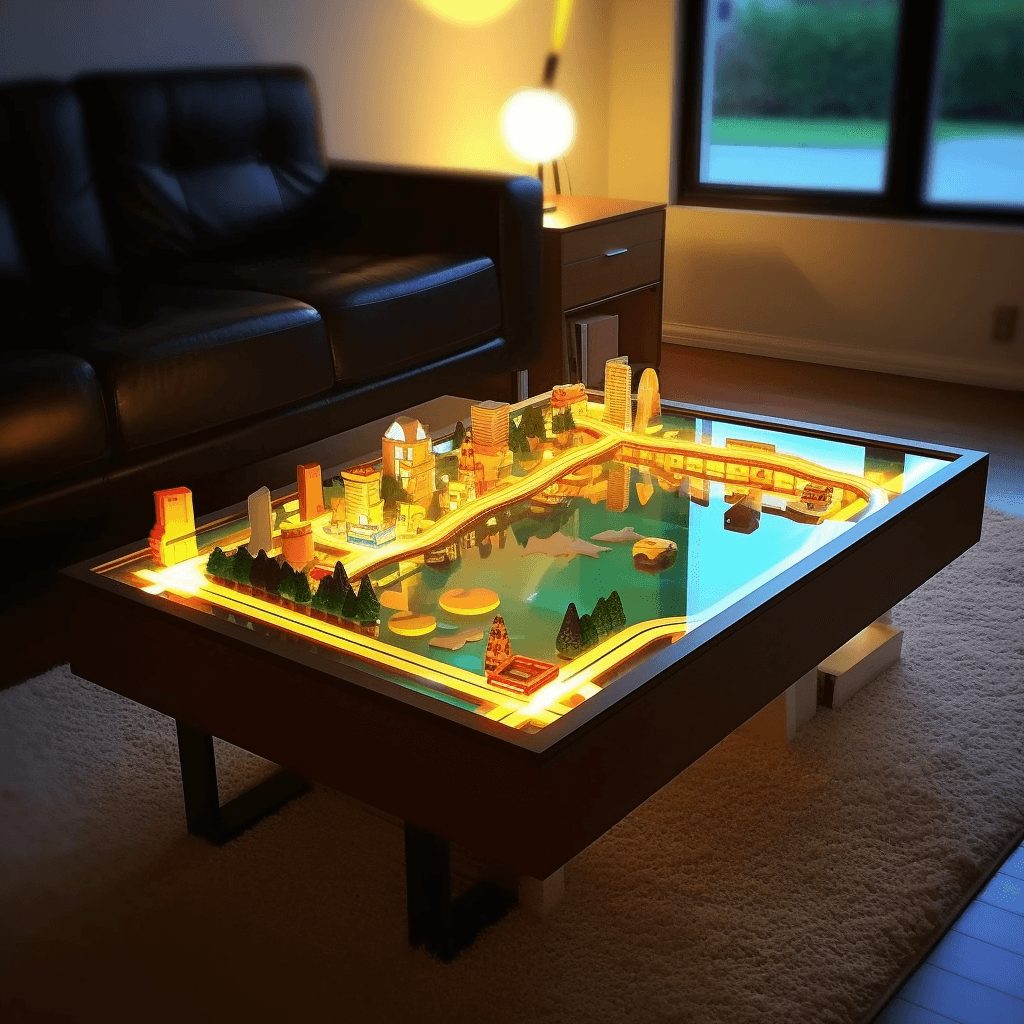

Lo-Fi Tabletop Games: We already have an established, growing market for VR games, but the AR gaming space is still wide open.

I’d love to see lightweight, whimsical, lo-fi games that I can play right on my coffee table or on my desk. I’m excited and curious to see what the makers of games like Monument Valley, Crossy Road and Untitled Goose Game can come up with for this new medium. To start, they should just replicate the simple game mechanics that exist in their games today, replacing taps and swipes with pinches and hand gestures, and then over time play with new ways to interact with the game using 3D manipulation and eye tracking.
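As a rough illustration of what swapping a tap for a pinch could look like, here is a minimal Python sketch. It assumes that some hand-tracking layer already gives you thumb-tip and index-tip positions in metres; the `PinchDetector` class, the input format and both distance thresholds are hypothetical choices of mine, not any platform's API.

```python
import math
from dataclasses import dataclass
from typing import Optional, Tuple

Point = Tuple[float, float, float]  # x, y, z in metres (hypothetical input format)

PINCH_START_M = 0.015  # assumed: fingertips closer than 1.5 cm begin a pinch
PINCH_END_M = 0.030    # assumed: fingertips farther than 3 cm apart end it


@dataclass
class PinchDetector:
    """Turns a stream of fingertip positions into discrete "tap" events."""
    pinching: bool = False

    def update(self, thumb_tip: Point, index_tip: Point) -> Optional[str]:
        """Return "tap" on the frame a pinch begins, otherwise None."""
        gap = math.dist(thumb_tip, index_tip)
        if not self.pinching and gap < PINCH_START_M:
            self.pinching = True
            return "tap"
        if self.pinching and gap > PINCH_END_M:
            self.pinching = False
        return None
```

The two separate thresholds are deliberate: with a single cutoff, fingers hovering right at the boundary would fire a burst of taps, so the pinch only ends once the fingertips have clearly moved apart. The existing game logic that handled a tap could then consume the `"tap"` event unchanged.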

Writing & Drawing: When I’m doing my most creative work, I do it in analog. I grab my pens, pencils, and markers and write stuff out on real paper, or on a whiteboard. The tactile nature from the contact of pen on paper simply cannot be replicated by touching plastic to glass.

If I’m wearing my Vision Pro, I want to be able to do the same, but have my work immediately digitized, divided into layers, and the words translated into text. I should also be able to draw virtual layers that I can manipulate digitally or use as guide lines that I can later trace with a real pen.

I imagine the flow having an onboarding process where you can scan in your writing utensils with your Vision Pro (which over time won’t be needed for new users as we’ll have already trained ML models to detect them automatically), and then virtually project a few fun & easy designs for you to trace on real paper. You should also be able to sketch out 3D objects on paper in 2D, and have algorithms translate them into 3D models, which you can then further manipulate virtually.

Virtual Windows with Amazing Views: When I’m in my office, I want to look out of my window and feel like I’m in an 80th floor penthouse apartment in Manhattan, or in a chalet in the Swiss Alps. Don’t have windows in your office? That’s fine, just draw virtual ones on your walls.

These are not static environments you’re looking at, either. They’re live environments with lighting and weather that adjust based on your time of day, and they simulate simple, randomized activity like planes flying over or cars honking (which you can tune out 🙂). On a video call? Have the view out your window adjust to that of your mate’s to feel just a tad more connected.

Immersive Movie Environments: I almost didn’t write this because it seems so obvious, but when I’m watching a movie, I want the environment around me to adjust to make me feel even more immersed in the scene. This already happens “by default” because Apple casts light from the screen onto the virtual environment around you, but the next level up from that would be to generate rain outside your windows when it’s raining in the movie, or project stars onto your ceiling when the current scene is set in space.

What I’m most excited about

A New Interaction Paradigm: Eyes & Gestures

As a product designer, I’m absolutely fired up about the chance to design for an entirely new interaction modality.

It’s worth repeating: interfaces can now respond to where the user is looking, and not just on a 2D screen, but also in the real world. They can also respond to different gestures users make with their hands, arms and head. If you haven’t seen it, watch the video demonstrating the new hand tracking capabilities of the Vision Pro. The level of granularity available to developers is quite impressive.

This is going to open up new use cases and experiences that none of us are even imagining yet. In particular, I’m most excited about what we can now do with these inputs when a user’s typical input mechanism (her hands) is occupied with typing, drawing, or perhaps playing an instrument.
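One simple way to act on gaze alone while the hands are busy is dwell-time selection: trigger an action when the eyes rest on the same target for long enough. The Python sketch below is only an illustration under my own assumptions; the `DwellSelector` class, the 0.8 second dwell time and the `"next_page"` target name are hypothetical, and it assumes something upstream already reports which target the user is looking at.

```python
from typing import Optional


class DwellSelector:
    """Fires once when gaze stays on a single target for `dwell_s` seconds."""

    def __init__(self, dwell_s: float = 0.8):  # assumed dwell time
        self.dwell_s = dwell_s
        self._target: Optional[str] = None
        self._since = 0.0
        self._fired = False

    def update(self, t: float, target: Optional[str]) -> Optional[str]:
        """Feed one gaze sample (time in seconds, target id or None).

        Returns the target id on the sample where the dwell completes,
        otherwise None.
        """
        if target != self._target:
            # Gaze moved to a different target (or away): restart the timer.
            self._target, self._since, self._fired = target, t, False
            return None
        if target is not None and not self._fired and t - self._since >= self.dwell_s:
            self._fired = True  # do not fire again until gaze leaves and returns
            return target
        return None


# Example: a pianist looks at a (hypothetical) "next_page" control to turn the page.
selector = DwellSelector(dwell_s=0.8)
for t, looked_at in [(0.0, "next_page"), (0.5, "next_page"), (0.9, "next_page")]:
    if selector.update(t, looked_at) == "next_page":
        print("turn the page")
```

The dwell time is the main design trade-off: too short and a passing glance triggers actions by accident, too long and the interaction feels sluggish, so in practice it would need tuning per use case.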